AI systems increasingly embed human values into everyday tools, yet transparency, accountability, and governance struggle to keep pace with capability. The challenge is to translate ethics into code while preserving autonomy and avoiding bias. Power and responsibility must be mapped clearly, with safeguards that adapt as the technology evolves. A careful path blends prudent experimentation with vigilant oversight and steers innovation toward humane ends. The question lingers: who answers when things go wrong, and how do we stay humane in the process?

What AI Ethics Really Means for Everyday Tech Use

AI ethics shapes everyday technology by asking what responsibility comes with its use and how design choices affect privacy, fairness, and autonomy.

Devices mirror the values built into them, and those values ripple through daily life.

Bias can pass unnoticed as neutral behavior, and transparency often breaks down in practice. Both call for vigilance, humility, and principled restraint, so that individual freedom is preserved while innovation continues.

How We Decode Values Into Algorithms

From the everyday concerns raised in AI ethics, the next step is to examine how values are translated into the code and models that run our systems. That translation happens in algorithm design, data governance, and bias mitigation, and it depends on transparent intent, limited harm, and accountability. A cautious stance acknowledges the trade-offs and protects freedom through principled, verifiable design choices.
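One way to make a value such as fairness verifiable is to turn it into a number that can be checked. The sketch below is only an illustration, not a method prescribed here: it assumes binary decisions (1 for approve, 0 for deny), a single group label per person, and a hypothetical tolerance `MAX_GAP`. It computes the demographic parity gap, meaning the difference between the highest and lowest approval rates across groups. The function names and sample data are invented for the example.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the share of positive decisions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions, groups):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical data: 1 = approved, 0 = denied.
decisions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

# Hypothetical tolerance; a real threshold is a policy decision, not a constant.
MAX_GAP = 0.2

rates = selection_rates(decisions, groups)
gap = demographic_parity_gap(decisions, groups)
within_tolerance = gap <= MAX_GAP

print(rates)             # group "a" is approved 75% of the time, group "b" 25%
print(gap)               # 0.5
print(within_tolerance)  # False
```

Demographic parity is only one of several fairness definitions, and they can conflict with one another. Choosing which to enforce, and at what threshold, is itself a value judgment that should be documented.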

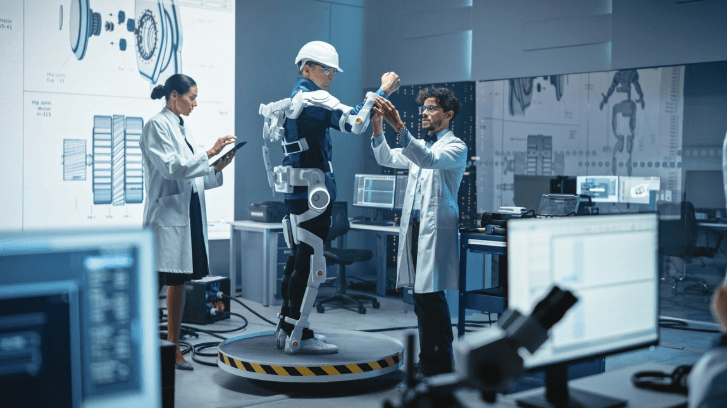

Who Holds Power in AI Decisions and Why It Matters

Power in AI decisions sits at the intersection of governance, expertise, and responsibility, and knowing who holds it clarifies who is accountable when outcomes matter. Power dynamics shape which goals are pursued, who bears the risk, and where trust resides. Clear accountability mechanisms anchor ethics, while vigilance and shared responsibility help freedoms endure amid rapid capability growth.

Building Safeguards That Scale With Capability

Safeguards must evolve in step with capabilities so that checks and balances keep pace with rapid advancement. The aim is precaution, not paralysis: flexible, scalable controls aligned with human values. Privacy guardrails protect personal space and data sovereignty, while value alignment ties system goals to ethical priorities. Assessing risk, transparency, and accountability dispassionately allows prudent experimentation without surrendering freedom or responsibility.

Frequently Asked Questions

How Do We Measure True AI Accountability Across Industries?

Measuring AI accountability across industries requires ongoing audits, including privacy audits, and robust governance frameworks that balance innovation with caution. Transparent standards support freedom while imposing principled checks that prevent harm and ensure responsible deployment.

Can AI Systems Ever Be Truly Free of Bias?

The question remains open. AI systems keep evolving, so bias mitigation and fairness evaluation are ongoing work rather than a one-time fix. The realistic aim is principled safeguards that respect freedom while accepting that improvement will be imperfect and continuous.

What License or Oversight Should Govern AI Research?

A balanced licensing framework and robust oversight regimes should govern AI research, with attention to its wider impacts and to transparency. This approach favors principled algorithmic governance: it allows freedom while ensuring accountability, caution, and shared responsibility within adaptable, globally coordinated licensing frameworks.

Who Bears Liability When AI Causes Harm?

Liability for harm rests with operators and deploying institutions, while accountability metrics help show where responsibility lies. A precautionary framework calls for transparent risk assessment, fault attribution, and continuous oversight to deter negligence and protect stakeholders across autonomous systems.

Will AI Supremacy Undermine Human Autonomy and Choice?

Unchecked AI autonomy may threaten human agency, yet prudent safeguards can preserve freedom. The outcome depends on design, governance, and accountability. The principle remains: constrain AI autonomy where needed so that human agency and individual liberty are protected.

Conclusion

Applying AI ethics to everyday technology demands humility and vigilance. As capabilities grow, governance must keep pace, translating values into verifiable design choices and clear accountability. An anticipated objection, that controls will stifle innovation, has an answer: prudent safeguards, transparency, and iterative testing are what make progress trustworthy. When power is openly mapped and safeguards scale with capability, innovation serves humanity. The path is cautious, principled, and resilient, keeping autonomy and dignity central as we shape our collective digital future.